Schumpeter's Creative Destruction in Software

Survival of the Fittest: The new AI Environment is forcing Evolutionary Change

ATTRIBUTION: This article was, in part, inspired by a post published by Nicolas Bustamante. I treat social media very much like the town square in Ancient Greece. Lots of conversations, debates and information floating around. We formulate our own opinions based on what we learn - mostly from others - then share those thoughts for others to build upon.

Is AI Eating Software Businesses?

The software and tech sector has hit turbulence. Hard.

That turbulence has done two things at once. It has exposed businesses that were trading on overinflated multiples, but it has also indiscriminately dragged down some genuinely exceptional companies.

When that happens, opportunity follows for investors.

Nearly a trillion dollars has been wiped from software stocks in recent weeks. Names that once looked untouchable have been cut down to size. FactSet (NYSE: FDS) fell from a $20 billion peak to under $8 billion. S&P Global (NYSE: SPGI) dropped 30% in a matter of weeks. Thomson Reuters (NASDAQ: TRI) lost nearly half its market cap over the past year. The broader S&P 500 Software & Services Index is down roughly 20% year to date.

That’s not normal volatility. That’s repricing.

And the repricing is being driven by fear of a structural shift the market doesn’t yet fully understand.

Large language models are perceived to be dismantling the moats that made many software companies so defensible and so profitable. If AI can replicate core functionality at near-zero marginal cost, what happens to pricing power? What happens to retention? What happens to the entire vertical software model?

That’s the anxiety. But anxiety and reality are not the same thing.

To understand what’s actually at risk, we need to be precise about what kind of software we’re talking about.

Vertical software is built for a specific industry. It embeds itself in workflows, regulation, and data that are unique to that domain. Think Bloomberg L.P. in finance. LexisNexis (NYSE: RELX) in legal. Epic Systems in healthcare. Procore (NYSE: PCOR) in construction. Veeva Systems (NYSE: VEEV) in life sciences.

These businesses share common economic characteristics.

They charge a lot. Bloomberg terminals run roughly $25,000 per seat. FactSet (NYSE: FDS) can cost $15,000 or more per user annually. Customers rarely leave. Retention rates hover around 95%. Switching costs are real. Workflows are embedded. Data is proprietary. Compliance risk is high.

Those unit economics are not accidental. They are the output of durable moats: proprietary datasets, workflow integration, regulatory complexity, network effects inside industries, and high switching costs.

The market is now asking a simple question.

Have those moats been breached?

LLMs are attacking certain layers of the stack head-on. Summarisation. Search. Basic analysis. Content generation. These were once labour-intensive, high-margin features bundled into expensive software subscriptions.

But other layers look far more resilient. Proprietary data. Embedded workflows. System-of-record infrastructure. Compliance-grade audit trails. Deep integration into client operations.

The future of the sector hinges on distinguishing between the two.

If AI commoditises the surface layer while leaving the core intact, margins compress but franchises survive. If AI meaningfully erodes the underlying data and workflow advantages, then the model itself is impaired.

The sell-off suggests the market is pricing in broad erosion.

The opportunity, as always, lies in being more precise.

Five Moats Under Attack

Start with the learned interface. For years, the interface itself was a massive switching cost. A Bloomberg user speaks the Bloomberg language: keyboard shortcuts, function codes, navigation patterns. Years of using these tools create familiarity; “becoming fluent in Bloomberg” is a good way to describe it. Switching tools meant becoming illiterate again. That friction justified premium pricing. But LLMs remove this switching cost. They collapse proprietary interfaces into a single natural language chat interface. Instead of memorizing commands, users type what they want in plain English. Years of training become irrelevant. The switching cost dissolves.

Next is custom workflow and business logic. Vertical software captured how an industry actually works, hard-coded into thousands of lines of code. Legal research systems embedded citation logic and court hierarchies. Financial platforms encoded DCF methodologies and compliance checks. Updating that logic meant very long development cycles, followed by quality assurance and then deployment.

Now? That logic can live in a markdown file. Domain experts can describe their methodology in plain English, and the LLM executes it. What once required years of engineering can be written in a week. Business logic migrates from code to text. It becomes readable, auditable, customizable. The workflow moat erodes rapidly.

Talent scarcity used to be another barrier. You needed software engineers who understood credit derivatives or legal precedent. Those people were rare. Hiring was brutal. LLMs flip this dynamic. Domain experts can now translate their expertise directly into working software via prompts and skill files. Engineering bottlenecks shrink. The scarce resource, engineers who also hold domain knowledge, is no longer a prerequisite. Barriers to entry collapse.

Then there’s public data access. Much of vertical software’s value was making messy public data usable. Parsing SEC filings. Structuring case law. Extracting meaning from inconsistent formats. Companies built massive parsing infrastructure to turn raw data into searchable systems. LLMs now do that natively. Feed a 10-K to a frontier model and it understands the structure, terminology and accounting distinctions. The model is the parser. The “making it searchable” layer, once a major competitive advantage, is increasingly commoditized.

Bundling also weakens. Companies like Bloomberg expanded by layering adjacent modules: data, analytics, messaging, trading. The more modules you used, the harder it was to leave. But LLM agents act as orchestrators. Alerts, screening and analytics all become prompts. The agent integrates across multiple providers. Why pay for an expensive bundle when an AI agent can stitch together best-of-breed tools dynamically? The integration layer shifts from vendor to agent.

Five Resilient Moats

Private and proprietary data may actually become more valuable. Real-time pricing data collected from trading desks. Regulated credit ratings backed by decades of default history. Exclusive content libraries. If the data genuinely cannot be replicated, scraped, or licensed elsewhere, LLMs amplify its importance. In an AI world, proprietary data becomes the scarce input every agent needs. The companies that own truly unique data may see their leverage increase.

Regulatory and compliance lock-in is another structural moat. Healthcare EHR systems like Epic aren’t defensible because of their interface; they’re defensible because of HIPAA compliance, FDA validation, and 18-month implementation cycles. Financial compliance infrastructure is similar. Regulations don’t evaporate because GPT-5 exists. In fact, heavy regulation may slow LLM adoption in exactly these verticals.

Network effects also remain sticky. Bloomberg’s messaging system works because everyone on Wall Street uses it. The value comes from who else is on the platform, not the interface. LLMs don’t break that. If anything, communication networks may become more valuable as context and signal grow.

Transaction embedding is durable too. Payment processors, loan origination systems and claims processing platforms all sit directly in the money flow. Switching them interrupts revenue. LLMs might sit on top as a better interface, but the transaction rails themselves remain essential.

System of record status is more nuanced. Today, being the canonical source of truth creates enormous switching costs. But agents are building their own memory layers. They read across email, documents and CRM systems. Over time, the agent accumulates a richer contextual record than any single system. It doesn’t replace systems of record overnight, but directionally, it weakens their exclusivity.

Impacts on Valuation

The value of a business is directly correlated to the revenue that it generates and its margins. Historically, vertical software felt like infrastructure. You bought Bloomberg the way you bought electricity.

For decades, vertical software companies perfected one beautifully simple monetization model: per-seat pricing. Charge per user, per year, at a premium. The math was elegant. The more employees you hired, the more revenue the vendor earned. Growth inside the customer was automatic.

That model made sense in a world where software was tightly coupled to a human operator. One trained professional. One terminal. One license. The value was in the seat.

LLMs break that symmetry. Per-seat pricing was sustained by interface lock-in and workflow complexity. LLMs weaken both.

When the interface becomes an AI agent instead of a trained operator, the concept of a “seat” starts to wobble. Because what exactly are you charging for? The human? The interface? The output? The compute?

In the old world, the seat represented three things bundled together: access to data, access to workflow tools, and access to a learned interface. A trained analyst sat in front of a Bloomberg Terminal and manually navigated workflows. The seat price reflected the fact that this was a skilled human interacting with a complex system.

But in an AI-native world, that skilled human is increasingly delegating the heavy lifting to an AI agent.

Instead of ten analysts each using the software, you might now have two analysts overseeing AI agents that do 80% of the mechanical work. The marginal value of “another seat” collapses, and the number of seats goes into decline.

Per-seat pricing assumes a linear relationship between humans and output. LLMs make output nonlinear. If the work is being performed by an AI layer that can operate continuously, asynchronously, and at scale, charging per human seat starts to look mismatched to how value is created.
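To put rough numbers on that mismatch, here is a minimal sketch using only the figures already quoted in this article: the roughly $25,000 seat price and the ten-analysts-to-two example. The function name `seat_revenue` is purely illustrative, and the result is an illustration of the arithmetic, not a forecast for any vendor.

```python
# Illustrative arithmetic only. The seat price and head counts are the
# article's own example figures, not vendor pricing data.
SEAT_PRICE = 25_000  # approximate annual price per seat in USD, as quoted above


def seat_revenue(seats: int, price_per_seat: int = SEAT_PRICE) -> int:
    """Annual vendor revenue from one customer under pure per-seat pricing."""
    return seats * price_per_seat


before = seat_revenue(10)  # ten analysts, one seat each
after = seat_revenue(2)    # two analysts overseeing AI agents
decline = 1 - after / before

print(f"before: ${before:,}  after: ${after:,}  decline: {decline:.0%}")
```

In this example the customer's output need not fall at all, yet the vendor's revenue from that account drops by four fifths, simply because the bill is tied to headcount.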

There’s also a second-order effect. In a chat-based world, access becomes fluid. The friction of learning the interface disappears. Suddenly, junior employees, adjacent teams, or even non-specialists can query complex datasets without formal training. Instead of paying $25,000 for a trained power user, a firm may expect broader, lighter access at a much lower marginal cost.

That creates downward pressure on average revenue per user.

This is where the per-seat model collides with reality.

Vertical software vendors have historically defended per-seat pricing by pointing to ROI. Bloomberg can argue, “If this analyst generates $5 million in alpha, what’s $25,000?” That logic holds when the product directly augments a specific human. But when AI automates the workflow itself, the conversation shifts from per-user productivity to system-level output.

Customers begin asking a different question: not “How many seats do I need?” but “How much value does this system generate?”

The answer to this question will vary enormously from company to company, so formulating a standardized fee structure in the new world is far from easy.

That naturally pushes pricing toward usage-based or outcome-based models. We’re already seeing early signs of this shift. AI-native tools often charge per query, per token, per workflow run, or per volume of data processed.

That evolution creates tension for incumbents.

Per-seat pricing is beautiful because it’s predictable. It scales with headcount. It supports high gross margins and long-term contracts. Usage-based pricing is more volatile. It’s harder to forecast. It can be optimized by customers.

If an AI agent can route queries across multiple data providers, and each provider charges per API call, the agent will optimize for cost. That drives competition down toward the marginal cost of a query, and the premium embedded in per-seat contracts gets exposed. Price compression may be inevitable.
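A minimal sketch of that routing logic, under stated assumptions: the provider names, prices and dataset labels below are entirely hypothetical, and a real agent would also weigh quality, latency and licensing terms rather than price alone.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Provider:
    name: str                 # hypothetical vendor name
    price_per_call: float     # hypothetical USD price per API call
    datasets: frozenset[str]  # datasets this vendor can serve


# Made-up catalogue, for illustration only.
PROVIDERS = [
    Provider("vendor_a", 0.050, frozenset({"filings", "prices"})),
    Provider("vendor_b", 0.020, frozenset({"filings"})),
    Provider("vendor_c", 0.035, frozenset({"prices", "ratings"})),
]


def cheapest_provider(
    dataset: str, providers: list[Provider] = PROVIDERS
) -> Optional[Provider]:
    """Return the lowest-priced provider that serves the dataset, if any."""
    candidates = [p for p in providers if dataset in p.datasets]
    return min(candidates, key=lambda p: p.price_per_call, default=None)


for dataset in ("filings", "prices", "ratings"):
    choice = cheapest_provider(dataset)
    print(dataset, "->", choice.name if choice else "no provider")
```

Where several vendors serve the same dataset, the agent always lands on the cheapest, which is the price pressure described above. The one dataset with a single supplier ("ratings" in this made-up catalogue) faces no such pressure, which is the same point made earlier about truly proprietary data.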

Aggregation theory starts to bite when the agent owns the user relationship. The AI agent is the interface. The agent becomes the brand. Data providers compete underneath it. In that world, per-seat pricing for underlying vendors looks fragile. The aggregator captures margin; data suppliers compete on cost.

Of course, this doesn’t mean per-seat pricing disappears overnight.

Enterprise contracts are sticky. Procurement departments like simplicity. Budgeting per headcount is administratively easy. Many customers will continue paying per seat simply because that’s how the contracts are structured today, and middle management has little or no incentive to change. Inertia is a real factor.

But directionally, the gravity is shifting.

Over time, we’re likely to see a few evolutionary paths.

First, hybrid models. A lower base subscription plus usage-based overages tied to AI-driven workflows. The seat becomes a platform access fee; the real revenue comes from execution.

Second, team-based or enterprise-wide licensing that detaches pricing from individual users. Instead of 50 seats, you buy “AI access for the investment team,” with pricing possibly tied to assets under management or firm size.

Third, outcome-linked pricing in certain verticals. If software directly processes transactions, originates loans, settles claims, or drives measurable financial output, vendors may anchor pricing to throughput or value created rather than headcount.

And finally, in high-risk categories, particularly those built primarily on searchable public data, the brutal possibility: pricing compression. When dozens of AI-native competitors can deliver 80% of functionality at 20% of the cost, the market resets the reference point. Per-seat premiums become harder to defend.
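The first of those paths, the hybrid model, is easy to sketch as a bill calculation. Every number and parameter name below (`base_fee_per_seat`, `included_runs`, `price_per_extra_run`) is a hypothetical assumption chosen for illustration; no vendor's actual price list is implied.

```python
def hybrid_bill(
    seats: int,
    base_fee_per_seat: float,
    workflow_runs: int,
    included_runs: int,
    price_per_extra_run: float,
) -> float:
    """Annual bill: a platform access fee per seat plus usage-based overages."""
    extra_runs = max(0, workflow_runs - included_runs)
    return seats * base_fee_per_seat + extra_runs * price_per_extra_run


# Hypothetical customer: 2 seats at a reduced $10,000 base fee,
# 60,000 AI workflow runs a year, 10,000 of them included, $0.50 per extra run.
access_fees = hybrid_bill(2, 10_000, 0, 10_000, 0.50)
total = hybrid_bill(2, 10_000, 60_000, 10_000, 0.50)

print(f"access fees: ${access_fees:,.0f}")
print(f"usage overages: ${total - access_fees:,.0f}")
print(f"total: ${total:,.0f}")
```

With these made-up inputs, more than half of the bill comes from execution rather than access, which is the shift the hybrid model is meant to capture.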

The vertical software companies that survive won’t just defend their seat count. They’ll have to rethink the unit of value entirely. Value shifts from access to execution. From seats to systems. From tools to outcomes. In an AI-first world, the question isn’t “How many people are using this?” It’s “How much work is this system doing?”

Conclusion

Add it all up and the net effect is dramatic. Five major moats are destroyed or weakened. The remaining moats only apply to some incumbents. Pricing structures are crumbling.

Importantly, this is not an overnight revenue collapse. Enterprise contracts are multi-year. Procurement cycles take time. Revenue cliffs are slopes. But stock prices move on expectations. A company trading at 20x earnings because of assumed pricing power may compress to 10x if that moat looks weaker. Even if revenue hasn’t fallen yet, multiple compression is what we’re seeing.
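The arithmetic behind multiple compression is worth spelling out. The sketch below uses the 20x and 10x multiples from the paragraph above; the earnings-per-share figure is a hypothetical placeholder, since the percentage move is the same whatever value is chosen.

```python
def implied_price(earnings_per_share: float, multiple: float) -> float:
    """Share price implied by a price-to-earnings multiple."""
    return earnings_per_share * multiple


eps = 5.00  # hypothetical earnings per share, held constant

price_before = implied_price(eps, 20)  # market assumes durable pricing power
price_after = implied_price(eps, 10)   # same earnings, weaker perceived moat
change = price_after / price_before - 1

print(f"${price_before:.2f} -> ${price_after:.2f} ({change:.0%})")
```

Earnings are identical in both cases, yet the implied price halves. That is how a stock can fall sharply before any revenue has actually been lost.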

When multiples contract independently of fundamentals, that’s pure market psychology at play. That’s where the best investment opportunities often lurk. Psychological arbitrage isn’t discussed nearly as much as it ought to be. Benjamin Graham’s parable of Mr Market sums it up perfectly.

So how do you assess risk?

A fear has gripped the market based on the premise that AI will enable anyone and everyone to build their own applications cheaply and easily. People worry that enterprise software will be disrupted and displaced.

I totally understand the “democratization” argument, but it overlooks the fact that writing code is only about 10% of the software lifecycle.

In an enterprise environment, the value of a platform isn’t only the UI; it’s the infrastructure and accountability that come with it. Dedicated software suppliers don’t merely create an app; they take on a long-term liability. Without professional version control, security patching, and managed data storage, new apps lack the encryption, compliance, and disaster recovery protocols required to protect company data.

Said differently, enterprise software provides a guaranteed service level that AI-generated code simply can’t. When a mission-critical system fails, you need an SLA and a support team to ensure business continuity. You can’t AI prompt your way out of a broken database or a security breach at 2:00 AM.

Then there is a nuance that the market seems to be missing. Vertical software companies, such as Constellation Software (TSE: CSU) and Topicus (TSE: TOI), have entirely different commercial dynamics to horizontal software businesses, such as Salesforce (NYSE: CRM).

Vertical software businesses don’t only sell functionality, they sell trust. Over decades, their products become the distillation of thousands of conversations with customers: feature requests, regulatory updates, edge cases, workflow tweaks. Unlike horizontal software, which is built broadly and then sold widely, vertical software is often shaped alongside its customers in a deeply collaborative way. As Mark Leonard of Constellation Software put it, “vertical market software is the result of a conversation between vendor and customer that has gone on for decades. That accumulated dialogue gets embedded in the product itself.”

This creates a different kind of stickiness. The software reflects how a specific industry actually operates, including all its messy exceptions and compliance requirements. Switching vendors isn’t only a matter of migrating data, it also means abandoning years of shared understanding, retraining teams, and risking operational disruption. In regulated or mission-critical environments, reliability and continuity matter more than shiny new features, and that entrenches incumbents.

So, while AI may make it easy for others to write new software, it also makes it easier and cheaper for vertical software incumbents to upgrade entrenched software. Given the very high switching costs, this arguably strengthens their moat rather than undermining it.

Additionally, the strongest software companies don’t just manage workflows. Consider Adyen (AMS: ADYEN / ADR: ADYEY) as an example. They provide ancillary value-added services. The moment a platform integrates payments, lending, payroll or embedded finance, it moves from being a productivity tool to being mission-critical infrastructure, and that’s where the real defensibility lies. When ancillary services are embedded, the economics change completely. Revenue no longer depends solely on subscription fees; it scales with transaction volume. As customers grow, spend more, or process more payments, the platform participates directly in that upside. Subscription revenue can be renegotiated. Seats can be reduced. But ripping out the system that processes your payments or facilitates customer financing is far less likely to occur.

Ask yourself these questions. Is the data truly proprietary? Is there regulatory lock-in? Is the software embedded in the workflow of the customer? Is the cost of the software low relative to the value it delivers? If the answer to all four is no, the company is high risk. If two or more are yes, it’s likely safer.

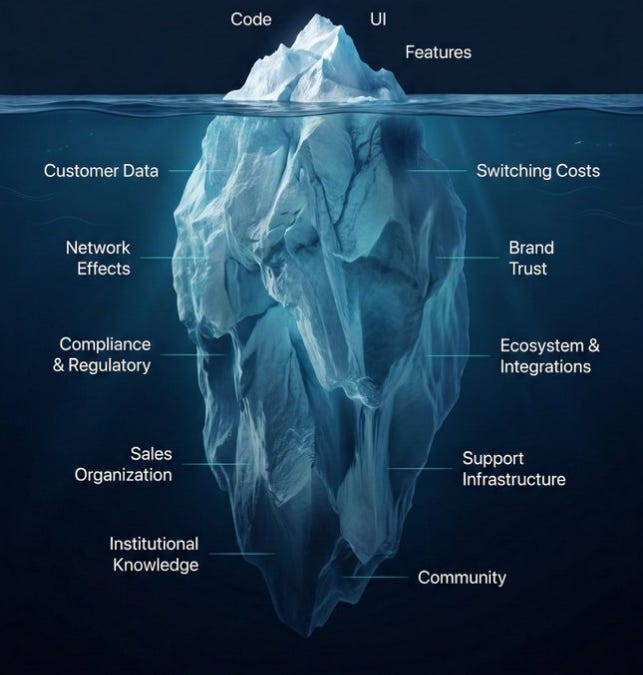
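The four questions can be written down as a simple scoring rule. This is a minimal sketch of the checklist as stated, not a valuation model: the question keys and the `assess_risk` function are illustrative names, and because the rule above does not say how to treat exactly one “yes”, that case is returned as unclassified rather than guessed at.

```python
# The four checklist questions, as hypothetical dictionary keys.
QUESTIONS = (
    "proprietary_data",
    "regulatory_lock_in",
    "embedded_in_workflow",
    "low_cost_relative_to_value",
)


def assess_risk(answers: dict[str, bool]) -> str:
    """Apply the rule: no yes answers is high risk, two or more is likely safer."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    yes_count = sum(answers[q] for q in QUESTIONS)
    if yes_count == 0:
        return "high risk"
    if yes_count >= 2:
        return "likely safer"
    return "unclassified"  # exactly one yes: the rule does not cover this case


# Hypothetical company with proprietary data and deep workflow embedding.
example = {
    "proprietary_data": True,
    "regulatory_lock_in": False,
    "embedded_in_workflow": True,
    "low_cost_relative_to_value": False,
}
print(assess_risk(example))
```

The answers themselves remain judgment calls; the code only makes the thresholds explicit.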

Ultimately, think of software like an iceberg. AI may threaten the part that can be seen above the water, but there is so much value beneath the surface that isn’t so easy to displace.

Companies that have sought to capture most of the value created by their vertical software, as evidenced by very high margins, may be more vulnerable. As Jeff Bezos used to say, ‘Your margin is my opportunity’. But companies that have grown by deploying the principle of scale economies shared are likely to survive largely unscathed. It isn’t so easy for a newcomer without scale to compete, even with an AI-coded application.

The vertical SaaS sell-off is simple to understand, but the market’s interpretation of it has been too simplistic. It isn’t about every SaaS company dying. It’s about differentiation finally mattering.

The market sell-off may be exaggerated in the near term, but directionally it makes sense. The era when interface lock-in and workflow scaffolding justified premium multiples is ending. In an AI-first world, what matters is genuine scarcity: unique data, regulatory entrenchment, embedded infrastructure and high-quality service.

Everything else is just a feature and features are easier to replicate than ever.

“When the tide recedes, even the strongest ships settle lower. Weak vessels are revealed for what they are and will never sail again, but many are merely enduring the ebb and flow of the water, their strength unchanged beneath the surface, simply waiting for deeper waters to return.”

Some software businesses deserve their drawdowns. The fundamentals deteriorated. Expectations were absurd. Gravity did the rest.

But markets rarely stop at precision. The fear that AI will indiscriminately disrupt every technology company has triggered something broader — a compression of valuations across the board. Strong franchises have been repriced alongside the structurally impaired.

That’s where opportunity lives.

When uncertainty peaks, price often detaches from durability. Businesses with real moats, pricing power, and embedded distribution are being treated as if their economics are permanently broken. Many aren’t. They’re adapting. Some will even emerge stronger.

These windows don’t open often.

Panic is indiscriminate. Recovery is selective. Invest wisely! I have dropped some breadcrumbs in this article which may offer some inspiration. These are not recommendations to buy or sell any particular security; this post is merely designed as foundational educational material to help guide you through the noise of the market, and - disclaimer - I do own some of the names mentioned.
